The servers run Ubuntu 22.04/24.04, and Kubernetes is installed at v1.35.3, the current official release at the time of writing.

1. Planning

| Role | IP | Hostname |
|---|---|---|
| Master1 | 192.167.8.20 | k8s-master-01 |
| Master2 | 192.167.8.21 | k8s-master-02 |
| Master3 | 192.167.8.22 | k8s-master-03 |
| Worker1 | 192.167.8.23 | k8s-node-01 |
| Worker2 | 192.167.8.24 | k8s-node-02 |
| Worker3 | 192.167.8.25 | k8s-node-03 |
| kube-vip | 192.167.8.26 | API VIP |

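Note: all nodes must be on the same Layer 2 network, because kube-vip's ARP mode relies on Layer 2 broadcast. According to the kube-vip documentation, it can provide a virtual IP for the control plane, and in ARP mode it broadcasts which node currently holds the VIP.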

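Disk planning

| Node type | System disk | Data disk | Reason |
|---|---|---|---|
| Master / control-plane node | SSD required or strongly recommended | A small SSD is enough | etcd, the API server, and containerd all issue frequent small I/O; spinning disks tend to stall |
| Worker node | SSD recommended | Depends on the workload | Running Pods, pulling images, and writing logs and temporary files are all noticeably faster on SSD |
| Storage / database node | SSD preferred | Depends on the data type | SSD is recommended for MySQL, PostgreSQL, Redis, MinIO, log search, and similar workloads |
| Static files / backups / NFS | HDD or ZFS is fine | HDD is acceptable | Suited to capacity-oriented storage, not to high-frequency random I/O |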

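The steps below assume the network interface is named ens18. Confirm the actual name on every machine first:

`ip -br addr`

If it is not ens18, replace every INTERFACE=ens18 below with your actual interface name, for example eth0.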
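If it is unclear which interface to use, one option is to take the device of the default route. The sketch below assumes the node IP lives on that interface, which may not hold on multi-homed hosts; `default_iface` is a hypothetical helper name, not part of any tool.

```shell
# Print the device name of the first default route read from stdin.
# Assumption: the node IP sits on the default-route interface.
default_iface() {
  awk '$1 == "default" {
         for (i = 1; i < NF; i++)
           if ($i == "dev") { print $(i + 1); exit }
       }'
}
```

On a node this could be used as `INTERFACE="$(ip -4 route show default | default_iface)"`; compare the result with the `ip -br addr` output before relying on it.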

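2. Run on all 6 nodes

- Set the hostname by running the matching command on each node.

`hostnamectl set-hostname k8s-master-01`

On the other nodes, change the name accordingly:

`hostnamectl set-hostname k8s-master-02`

- Write the hosts file

Run on all nodes:

`cat >> /etc/hosts <<'EOF'`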
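The heredoc body is not shown above. A minimal sketch that builds the entries from the planning table in step 1 might look like the following; the helper names are hypothetical, and the append is written so that running it twice does not duplicate lines.

```shell
# Print the hosts entries for the six nodes from the planning table.
k8s_hosts_entries() {
  cat <<'EOF'
192.167.8.20 k8s-master-01
192.167.8.21 k8s-master-02
192.167.8.22 k8s-master-03
192.167.8.23 k8s-node-01
192.167.8.24 k8s-node-02
192.167.8.25 k8s-node-03
EOF
}

# Append only the entries whose hostname is not yet present.
# $1: hosts file to edit (pass /etc/hosts on a real node).
add_k8s_hosts() {
  k8s_hosts_entries | while read -r ip name; do
    grep -qw -- "$name" "$1" || printf '%s %s\n' "$ip" "$name" >> "$1"
  done
}
```

As root on each node, `add_k8s_hosts /etc/hosts` would then add the six entries.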

- Turn off swap, and comment out the swap entry in /etc/fstab so that swap stays off after a reboot

`swapoff -a`
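Only `swapoff -a` is shown above; the fstab edit is described but not listed. One way to do it, sketched here with a hypothetical helper name and assuming GNU sed, is to comment out every active line whose filesystem type is `swap`:

```shell
# Comment out every active swap entry in an fstab-style file.
# $1: file to edit (defaults to /etc/fstab); a .bak copy is kept.
disable_swap_in_fstab() {
  sed -E -i.bak '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "${1:-/etc/fstab}"
}
```

After running `disable_swap_in_fstab` as root, `swapon --show` should print nothing once swap is off.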

Note: a common pitfall on Ubuntu 24 is cloud-init recreating swap automatically. Check its configuration:

`ls /etc/cloud/cloud.cfg.d/`

- Load the kernel modules

`cat >/etc/modules-load.d/k8s.conf <<'EOF'`

- Configure the kernel parameters

`cat >/etc/sysctl.d/99-kubernetes-cri.conf <<'EOF'`
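The bodies of both heredocs are cut off above. The sketch below fills them with the modules and sysctl keys that the Kubernetes container-runtime prerequisites usually call for (`overlay`, `br_netfilter`, bridge netfilter, and IPv4 forwarding). Treat the exact contents as an assumption about what the original files held; the function names are hypothetical.

```shell
# Write the module list.
# $1: target file (/etc/modules-load.d/k8s.conf on a real node).
write_k8s_modules() {
  cat > "$1" <<'EOF'
overlay
br_netfilter
EOF
}

# Write the sysctl settings.
# $1: target file (/etc/sysctl.d/99-kubernetes-cri.conf on a real node).
write_k8s_sysctl() {
  cat > "$1" <<'EOF'
net.bridge.bridge-nf-call-iptables  = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward                 = 1
EOF
}
```

After writing both files as root, `modprobe overlay`, `modprobe br_netfilter`, and `sysctl --system` apply the settings without a reboot.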

- Install basic tools

`apt update`

- Time synchronization

`apt install chrony -y`

3. Install containerd on all nodes

`apt install -y containerd`

Switch the cgroup driver to systemd:

`sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml`
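The sed command only works if /etc/containerd/config.toml already exists, and the Ubuntu package may not ship one. The sketch below wraps the same sed in a hypothetical helper and lists, as comments, the surrounding steps that are commonly used to generate the default config first; whether the original post ran exactly these steps is an assumption.

```shell
# Flip SystemdCgroup to true in a containerd config file (same sed as above).
# $1: config file (defaults to /etc/containerd/config.toml).
enable_systemd_cgroup() {
  sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "${1:-/etc/containerd/config.toml}"
}

# On a real node, as root (left commented out so the snippet has no side effects):
#   mkdir -p /etc/containerd
#   containerd config default > /etc/containerd/config.toml
#   enable_systemd_cgroup
#   systemctl restart containerd
#   systemctl enable containerd
```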

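4. Install kubelet/kubeadm/kubectl v1.35.3 on all nodes

The new Kubernetes package repositories are split per minor version, so v1.35 must use core:/stable:/v1.35. The official documentation also states that the legacy apt.kubernetes.io repository is deprecated and frozen.

`mkdir -p /etc/apt/keyrings`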
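Only the first command of the repository setup is shown above. The remaining steps in the upstream install guide follow the pattern below: download the signing key, then write a source line that names the minor version. The helper name is hypothetical, and the commented commands are a sketch of that pattern rather than the post's exact text.

```shell
# Print the apt source line for a given Kubernetes minor version.
# $1: minor version such as v1.35.
k8s_apt_source_line() {
  printf 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/%s/deb/ /\n' "$1"
}

# On a real node, as root (commented out so the snippet has no side effects):
#   curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.35/deb/Release.key \
#     | gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg
#   k8s_apt_source_line v1.35 > /etc/apt/sources.list.d/kubernetes.list
#   apt update
```

Because the minor version is part of the URL, moving to a later minor release means rewriting this source line, not just running `apt upgrade`.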

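Once you can see 1.35.3 listed, install it:

`apt install -y kubelet=1.35.3-* kubeadm=1.35.3-* kubectl=1.35.3-*`

If apt reports that 1.35.3-* cannot be found, run this first:

`apt-cache madison kubeadm`

Note the exact full version string it prints, then install by that full version, for example:

`root@template:~# apt-cache madison kubeadm | head`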
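Picking the full version string by eye is easy to get wrong across six nodes. As a sketch, the hypothetical helper below reads `apt-cache madison` output and prints the first package version that begins with the wanted upstream version. It assumes the usual `name | version | source` column layout.

```shell
# Read `apt-cache madison <pkg>` output on stdin and print the first full
# package version that starts with the wanted upstream version.
# $1: upstream version such as 1.35.3.
pick_pkg_version() {
  awk -F'|' -v want="$1" '{
    gsub(/[[:space:]]/, "", $2)
    if (index($2, want "-") == 1) { print $2; exit }
  }'
}
```

For example, `VER="$(apt-cache madison kubeadm | pick_pkg_version 1.35.3)"` followed by `apt install -y kubelet="$VER" kubeadm="$VER" kubectl="$VER"`. This assumes all three packages share the same revision suffix, which is worth checking with `apt-cache madison kubelet kubectl`.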

Run on all 6 nodes:

`apt install -y \`

After the installation, check the versions:

`kubelet --version`

5. Pin the Kubernetes version

Run on all 6 nodes:

`apt-mark hold kubelet kubeadm kubectl`

From now on, running apt update or apt upgrade will not upgrade Kubernetes automatically.

6. Configure crictl to avoid repeated warnings later

Run on all 6 nodes:

`cat >/etc/crictl.yaml <<'EOF'`

If it prints the containerd information, the configuration is working.
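The contents of /etc/crictl.yaml are cut off above. A minimal sketch, assuming containerd listens on its default socket at /run/containerd/containerd.sock, could be written like this (the function name is hypothetical):

```shell
# Write a crictl config that points at containerd's default socket.
# $1: target file (/etc/crictl.yaml on a real node).
write_crictl_config() {
  cat > "$1" <<'EOF'
runtime-endpoint: unix:///run/containerd/containerd.sock
image-endpoint: unix:///run/containerd/containerd.sock
timeout: 10
debug: false
EOF
}
```

As root, `write_crictl_config /etc/crictl.yaml` followed by `crictl info` should then print the runtime status without endpoint warnings.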

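7. Start kubelet

Run on all 6 nodes:

`systemctl enable kubelet`

At this point kubelet may report a failed state. That is expected, because kubeadm init/join has not run yet.

Check the status:

`systemctl status kubelet --no-pager`

Anything other than "command not found" is fine.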

8. Confirm the baseline state before initialization

Run on all 6 nodes:

`# Confirm that swap is 0`

九、只在 master-01 配置 kube-vip

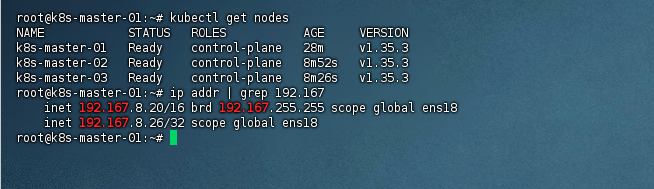

我的master-01是

1 | 192.167.8.20 |

下面以 ens18 举例:

1 | export VIP=192.167.8.26 |

生成 kube-vip 静态 Pod:

1 | mkdir -p /etc/kubernetes/manifests |
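The generation command itself is cut off above. The kube-vip documentation generates the manifest by running the kube-vip image with `manifest pod`. The sketch below wraps that call for ARP mode on the control plane. `KUBE_VIP_CMD` and `KVVERSION` are hypothetical variables introduced here, and the image tag is deliberately left for you to choose.

```shell
# Generate a control-plane kube-vip static Pod manifest in ARP mode.
# $1: VIP, $2: interface, $3: output file.
# KUBE_VIP_CMD is a hypothetical override for how kube-vip is invoked.
gen_kube_vip_manifest() {
  ${KUBE_VIP_CMD:-kube-vip} manifest pod \
    --interface "$2" \
    --address "$1" \
    --controlplane \
    --arp \
    --leaderElection > "$3"
}

# On master-01, with KVVERSION set to the kube-vip release you chose:
#   ctr image pull "ghcr.io/kube-vip/kube-vip:$KVVERSION"
#   KUBE_VIP_CMD="ctr run --rm --net-host ghcr.io/kube-vip/kube-vip:$KVVERSION vip /kube-vip"
#   gen_kube_vip_manifest "$VIP" "$INTERFACE" /etc/kubernetes/manifests/kube-vip.yaml
```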

Check the result:

`ls -l /etc/kubernetes/manifests/kube-vip.yaml`

10. Initialize the cluster on master-01 only

Run on k8s-master-01:

`cat >/root/kubeadm-config.yaml <<'EOF'`

Initialize (if it hangs, see step 11):

`kubeadm init --config=/root/kubeadm-config.yaml --upload-certs`

On success it prints two kinds of join command.

One is for joining a master:

`kubeadm join 192.167.8.26:6443 --token xxx \`

The other is for joining a worker:

`kubeadm join 192.167.8.26:6443 --token xxx \`

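11. If init hangs, the most likely cause is that the Kubernetes images cannot be pulled from within mainland China

1. Interrupt init with Ctrl + C

2. Then clean up:

`kubeadm reset -f`

3. Edit kubeadm-config.yaml and add a domestic image registry

`vi /root/kubeadm-config.yaml`

The result should look roughly like this:

`apiVersion: kubeadm.k8s.io/v1beta4`

Mind the indentation: imageRepository sits at the same level as kubernetesVersion.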
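Only the first line of the file is shown above. As a rough sketch of a config matching the description (v1beta4, kubernetesVersion and imageRepository at the same level, the VIP as controlPlaneEndpoint), the hypothetical helper below writes a minimal ClusterConfiguration. The podSubnet of 10.244.0.0/16 is Flannel's default and is an assumption about the original file, as is any mirror you pass in.

```shell
# Write a minimal kubeadm ClusterConfiguration.
# $1: output file, $2: optional image repository (omitted when empty).
write_kubeadm_config() {
  {
    echo 'apiVersion: kubeadm.k8s.io/v1beta4'
    echo 'kind: ClusterConfiguration'
    echo 'kubernetesVersion: v1.35.3'
    if [ -n "${2:-}" ]; then
      echo "imageRepository: $2"
    fi
    echo 'controlPlaneEndpoint: "192.167.8.26:6443"'
    echo 'networking:'
    echo '  podSubnet: 10.244.0.0/16   # Flannel default pod network (assumption)'
  } > "$1"
}
```

For example, `write_kubeadm_config /root/kubeadm-config.yaml registry.aliyuncs.com/google_containers`. That registry is a mirror commonly used in mainland China; substitute whichever mirror you trust.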

4. First list the images that are required

`kubeadm config images list --config=/root/kubeadm-config.yaml`

5. Pre-pull the images manually

`kubeadm config images pull --config=/root/kubeadm-config.yaml`

If the pull succeeds, check again and run init only once these images are listed:

`crictl images`

6. Copy kubeadm-config.yaml to master-02 and master-03

`scp kubeadm-config.yaml root@192.167.8.21:/root`

Then go back to k8s-master-01 and run init:

`kubeadm init --config=/root/kubeadm-config.yaml --upload-certs`

On success it prints two kinds of join command; be sure to save them. If it fails, see step 17.

十一、配置 kubectl

只在 master-01 执行:

1 | mkdir -p $HOME/.kube |

检查:

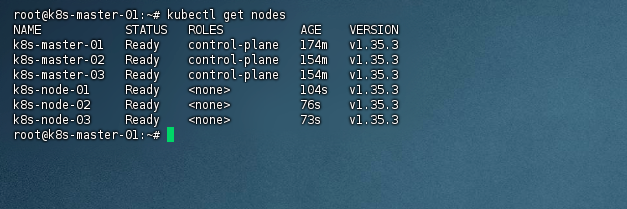

1 | kubectl get nodes |

12. Install the Flannel network plugin

Run on master-01:

`kubectl apply -f https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml`

13. Copy the kube-vip manifest to master-02/master-03

Run on master-01:

`scp /etc/kubernetes/manifests/kube-vip.yaml root@192.167.8.21:/etc/kubernetes/manifests/`

If the target directory does not exist, create it on master-02/master-03 first:

`mkdir -p /etc/kubernetes/manifests`

14. Join master-02/master-03 to the cluster

On master-02 and master-03, run the master join command you saved, for example:

`kubeadm join 192.167.8.26:6443 --token xxx \`

15. Join the worker nodes to the cluster

On the 3 workers, run the worker join command you saved, for example:

`kubeadm join 192.167.8.26:6443 --token xxx \`

The final result should be:

`k8s-master-01 Ready control-plane`

16. If the token has expired

On master-01, generate a new worker join command:

`kubeadm token create --print-join-command`
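The command above only covers workers. For a control-plane node, the certificates uploaded by `--upload-certs` also expire (kubeadm keeps them for two hours), so a fresh certificate key is needed as well. A minimal sketch follows, assuming the key is the last line printed by `kubeadm init phase upload-certs --upload-certs`. The function name and the `KUBEADM` override are hypothetical.

```shell
# Print a fresh join command for a control-plane node.
# KUBEADM is a hypothetical override for the kubeadm binary path.
control_plane_join_command() {
  local kubeadm="${KUBEADM:-kubeadm}" key
  # Re-upload the control-plane certificates; the key is the last line printed.
  key="$("$kubeadm" init phase upload-certs --upload-certs | tail -n 1)"
  "$kubeadm" token create --print-join-command --certificate-key "$key"
}
```

Run `control_plane_join_command` as root on master-01 and execute the printed command on the new master within the two-hour window.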

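17. Reset commands when initialization fails

Run on the failed node:

`kubeadm reset -f`

Leave kube-vip out for now and get init to succeed first: in /root/kubeadm-config.yaml, temporarily change the VIP address to this node's own IP. The line to change is:

`controlPlaneEndpoint: "192.167.8.26:6443"`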
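Editing that line by hand on a stressed cluster invites typos. As a sketch, the hypothetical helper below rewrites it with GNU sed. Pass the node's own IP for this fallback, and the VIP again once kube-vip is up.

```shell
# Point controlPlaneEndpoint in a kubeadm config at <host>:6443.
# $1: config file, $2: host or IP (node IP for the fallback, VIP afterwards).
set_control_plane_endpoint() {
  sed -i -E "s|^([[:space:]]*controlPlaneEndpoint:).*|\1 \"$2:6443\"|" "$1"
}
```

On master-01 that would be `set_control_plane_endpoint /root/kubeadm-config.yaml 192.167.8.20`.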

Then run init:

`kubeadm init --config=/root/kubeadm-config.yaml --upload-certs`

After it succeeds, configure kubectl:

`mkdir -p ~/.kube`

Deploy kube-vip only after init has succeeded:

`cat >/root/kube-vip-rbac.yaml <<'EOF'`

Apply the kube-vip RBAC:

`kubectl apply -f /root/kube-vip-rbac.yaml`

Generate the kube-vip manifest as in step 9, changing the IP addresses to your own values.

Add the ServiceAccount:

`sed -i '/^spec:/a\ serviceAccountName: kube-vip' /etc/kubernetes/manifests/kube-vip.yaml`

Verify the VIP:

`sleep 20`
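The checks that follow the sleep are not shown. One way to confirm that the VIP is bound on this node, sketched with a hypothetical helper, is to look for the address in `ip -br addr` output:

```shell
# Succeed if the address $1 appears in `ip -br addr` output read from stdin.
has_addr() {
  grep -qF " $1/"
}

# On master-01 (commented out so the snippet has no side effects):
#   ip -br -4 addr show dev ens18 | has_addr 192.167.8.26 && echo "VIP is bound here"
#   curl -k https://192.167.8.26:6443/healthz
```

The leading space and trailing slash in the pattern keep 192.167.8.2 from matching 192.167.8.26. The curl call should get an answer if the API server is reachable through the VIP.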

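Once that succeeds, install the CNI network plugin:

`kubectl apply -f https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml`

Wait about a minute, then check:

`kubectl get pods -A -o wide`

If the images cannot be pulled from within mainland China, use this first to see exactly where it is stuck:

`kubectl get pods -A`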

Once the VIP is working, update kubeconfig:

`grep server ~/.kube/config`

Now that kube-vip has taken over the VIP, the next step is to change controlPlaneEndpoint in the cluster configuration back to the VIP.

`grep controlPlaneEndpoint /root/kubeadm-config.yaml`

Verify:

`grep server ~/.kube/config`
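The grep commands only show the current value; the edit itself is cut off above. A minimal sketch for the kubeconfig side, using a hypothetical helper and GNU sed, rewrites the `server:` line to point at the VIP:

```shell
# Rewrite the API server address in a kubeconfig file, keeping port 6443.
# $1: kubeconfig path, $2: new host or IP.
set_kubeconfig_server() {
  sed -i -E "s|^([[:space:]]*server:) https://[^:]+:6443|\1 https://$2:6443|" "$1"
}
```

For example, `set_kubeconfig_server ~/.kube/config 192.167.8.26`, after which `kubectl get nodes` should still respond if the VIP is healthy.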

Once everything checks out, join the remaining master nodes.

Prepare master-02 and master-03:

`mkdir -p /etc/kubernetes/manifests`

Copy kube-vip.yaml from master-01:

`scp /etc/kubernetes/manifests/kube-vip.yaml root@192.167.8.21:/etc/kubernetes/manifests/`

Join them as control-plane nodes:

`kubeadm join 192.167.8.26:6443 \`

Join the worker nodes, for example worker 01 to 03:

`kubeadm join 192.167.8.26:6443 --token zjron3.xxx --discovery-token-ca-cert-hash sha256:xxx`